We Have Stopped Developing Software.

Developers have stopped writing code and started training developer models. A mental model for the brute-Dev era: programming by examples, edge cases, and the Three Zones of Supervision.

In my previous post, I argued that AI is essentially a new communication technology, more than an intelligence technology. I got both positive and challenging critique, as I always do when I raise this notion. "What is intelligence" (the software of the evolution process) is a nice philosophical question, but in this article I would like to focus on three things:

- The core of the AI learning / training process.

- The change LLMs have introduced.

- The implications of that on software development.

AI: A new type of programming

Programming is the way we make machines follow a certain logic when processing data: rules for the expected output (data) given an input (data). Whether the input is invoices and the output is a tax report, or the input is location and the output is a navigation route, all programs follow the same principle:

Input data + Logic (code) = Output data

In this world, we as humans communicate information to the machine through code. Given an input, we put deterministic logic in place (a piece of code, a feature) to produce the expected output. AI (deep learning) has changed the way we apply this equation, and since 2012 we have been in the era of "programming by examples".

Programming by example

We have transformed the programming equation of:

Input data + Logic (code) = Output data

to:

Input data + Output data = Logic (code)

We no longer feed the logic into machines. We collect input-output examples, feed them to the machine, and the machine produces the logic, the code. All we are left with is choosing the right examples and operating the learning machine. The deep learning era has started.
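
The two equations can be put side by side in a minimal sketch. This is an illustration only: it swaps deep learning for a plain least-squares line fit, and the temperature-conversion task, the function names, and the example pairs are all assumptions made for the sketch.

```python
# Classic programming: Input data + Logic (code) = Output data.
# A human writes the rule explicitly.
def handwritten_rule(celsius: float) -> float:
    return 1.8 * celsius + 32.0


# Programming by example: Input data + Output data = Logic.
# The "logic" (slope and intercept) is recovered from input-output pairs
# with a least-squares line fit, a stand-in for a real training process.
def learn_rule(examples):
    n = len(examples)
    mean_x = sum(x for x, _ in examples) / n
    mean_y = sum(y for _, y in examples) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in examples)
    variance = sum((x - mean_x) ** 2 for x, _ in examples)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


# Hypothetical examples: (input, expected output) pairs.
examples = [(0.0, 32.0), (10.0, 50.0), (25.0, 77.0), (100.0, 212.0)]
learned_rule = learn_rule(examples)
```

In the second half nobody typed `1.8` or `32.0`: the rule lives entirely in the examples, so choosing the examples is where the programming happens.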

Deep learning ignited the first modern AI era, and it did so fast. AlexNet, a deep learning model, won the ImageNet competition in 2012 by a wide margin, and by 2013 it was hard to find a computer vision paper that was not based on deep learning. An industry was born: the industry of generating examples for machines to learn from, input and output pairs. We stopped trying to figure out nature's rules and started copying them bluntly. This is why I chose to focus Dataloop on labeling and data management: while training got all the spotlight, the real magic happens in organizing and labeling the data. This is where AI system designers actually get to program the AI model's behavior (in professional terms, labels compress the data into a lossy alphabet; labels are the essence of the data).

Deep learning was successful mainly in computer vision (sparking the first AI venture wave, with autonomous driving as the leading use case). The ability of the same model to work well on different tasks was limited to computer vision, but don't get it wrong: the fact that a single model can perform so many tasks is nothing short of magic, just like chat felt three years ago and agentic coding feels today. That limit held until 2017, when transformers were introduced. GPT-1 followed in 2018, and suddenly the magic spread into textual data, data with much more complex and abstract patterns.

With text models (now called language models), a new door started to open. As the models improved, the complexity of the examples that the models could handle leaped:

2015 example: "Labeled cat"

2023 example: "Solve this differential equation, step by step"

So summarizing the above:

- Information flows into machines from humans through programming.

- We have switched from programming rules to feeding examples and letting machines "guess the rules".

- Data complexity went up from "Cat vs. Dog" to "Build me an app".

Now replace "programming" with "teaching" and you get a simple point that is often overlooked: we humans have been teaching the machines all along, and we still are. We just switched from explaining the rules to showing examples of them. This is important, since any notion, plan, or strategy that assumes humans will be out of the loop is destined to fail, dark factories included. Don't get me wrong: the dark factory pattern has a lot of truth in it, just not the whole truth.

"I am not writing code anymore"

Of course you aren't. You have started feeding the machine examples, manually training your model one example after another, and oh boy, descending that gradient is exhausting. We need a better way to train our software models.

The complexity of the data that language models can handle keeps rising, following a trend known as "the scaling law": the more data and compute you throw at a (larger) model, the better the result. So models have kept growing significantly, demanding more compute and more data. And the result? The complexity of the data they can handle successfully keeps rising, with coding becoming the leading use case. We are all trying to figure out how to change 60-year-old habits, while everything changes faster than we can handle.

In the beginning, the change to a professional coding routine was almost unnoticeable: just code autocomplete inside the editor. But that autocomplete quickly turned into complete auto-development of apps (vibe coding) and is now transitioning into agentic engineering and workflows, where AI agents complete coding tasks fully autonomously. The machines can now handle more and more complex tasks, involving increasingly complex data patterns.

That journey felt and still does feel like magic, with models that are increasingly capable of taking on tasks that were not possible just a while ago, for no effort and for a fraction of the cost. Or at least, that's how it looks at first sight.

But under the hood, something much bigger and more fundamental is happening. Developers have stopped developing applications and started building a new layer of abstraction, Langware, usually written as simple Markdown text files. These documents create a layer on top of the product that is responsible for developing the product.

But Langware is much more than instructions on how to build the product. It is also the instructions on how to run marketing, support, and operations. We are now coding the entire company, not just the product, and everybody is a programmer. And the software? We are no longer writing software or products. We are building the model that does it for us. That model is crafted for our specific product, organization, and operations. It runs on top of LLMs in the form of agents and skills that are coding, maintaining, enhancing, supporting, and selling the product, with our supervision. We are creating a reflection of our organization in Markdown files.

Welcome to the brute-Dev era

In computer science, brute force means trying candidate solutions one after another until one of them works. Bitcoin mining, for example, is designed around a brute-force search for a number (a nonce) that makes a block's hash meet a target.
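
A toy sketch of that kind of search, under loud assumptions: real Bitcoin mining hashes a binary block header twice with SHA-256 and compares the result to a numeric target, while this simplified version hashes a string once and only asks for a few leading zero hex digits. The function name and the `difficulty` parameter are hypothetical.

```python
import hashlib


def find_nonce(data: str, difficulty: int = 3) -> int:
    """Try nonces 0, 1, 2, ... until the SHA-256 hex digest of data + nonce
    starts with `difficulty` zeros. There is no shortcut: we just guess."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{data}{nonce}".encode()).hexdigest()
        if digest.startswith(prefix):
            return nonce
        nonce += 1


nonce = find_nonce("block", difficulty=3)
```

The search returns a valid answer but teaches nothing about how to find the next one faster, which is the property the rest of this section builds on.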

One of the first things you learn as a computer science student is that brute force in all of its flavors is bad. It's the admission that you have failed to find a smart solution and moved to shooting in the dark. You found the final answer, but you have no way of showing why, or of improving for the next time. AI modeling, for all AI models, is essentially a brute-force activity. We don't know why the neural net has decided this is a cat. We just fed it enough examples of cats so it now correlates "cat-like thing" with the word cat. Internally, the network will have many duplicated and overlapping routes to detect a cat. We don't care, as long as the answer is right. In the machine learning world, fitting the examples you have this closely is called overfitting, and the AI industry is an overfitting industry by its nature. That includes your ChatGPT or Claude Code: just ask it "how many r's in strabery" (keep the typo).

Saying we don't care is probably correct as long as we're trying to detect cats in the deep learning experiment, but when it comes to medical diagnosis, car accidents, or insurance claims, the answer is very different. The AI industry has been struggling with this issue for more than a decade with very limited success at generalizing, and more "case by case" patching. Given the fact that self-driving cars are still not broadly available, the problem is clearly not simple to solve.

And what is the problem in two simple words? Edge cases.

Edge cases

Often when you ask for an effort estimate, whether for software or for renovating your house, the work ends up taking longer and costing more than originally planned. Why? It is very easy for us to estimate the high-level effort; it's the small details that we ignore, and they accumulate into significant effort. The final product always has a lot of small moving parts in it, requiring attention and effort. Saying your Gmail is for sending and receiving emails covers 80% of its goal; how to connect it to OpenClaw is just a tiny fraction of the other 20%.

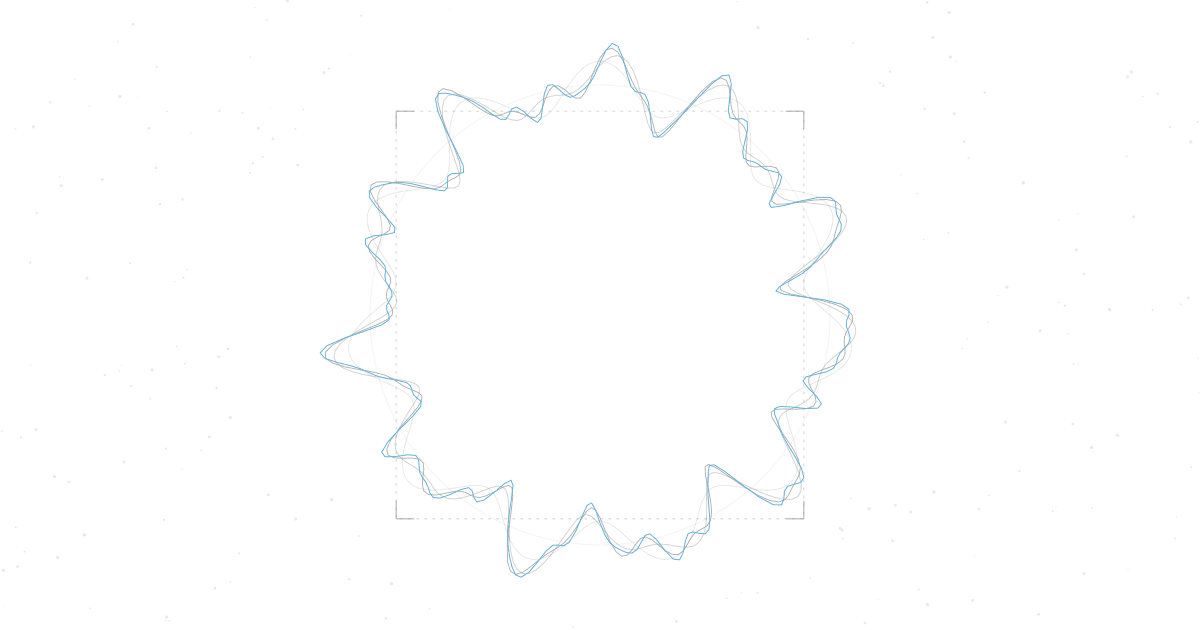

This is nature's way, and here is an intuitive visual to explain why:

We tend to estimate effort by looking at the higher-level picture, the area, but the actual effort requires us to solve the perimeter, the corner cases of our product:

- Providers / tech stack do not supply the services or APIs as we planned.

- Users do not work the way we imagined they would.

- Cost is too high, performance is unacceptable.

- Security and compliance force changes.

- Many, many bugs.

- Technical debt.

- Legacy support and backward compatibility.

And here we are at ground zero of this new development era's pain: most of the software effort is around the edges, and AI is very bad at the edges. AI solves the 80% fast, the first and easy 80%.

How do we go from here?

Accept it: within boundaries

The first thing we need to do is embrace the brute force. Don't try to resist it as a core principle. Apply the lessons we have from the machine learning world to the software development world. That means:

- We collect a lot of data examples and run a lot of compute.

- The examples we collect need to be unique and cover many aspects.

- In order to reach a 100% pass rate, we need to overfit.

- The AI-generated code will behave like a neural network, containing many paths and duplications, and will be WET code (the opposite of DRY). This is now legit code.

Yet a true win happens when you allow AI to take full control where it's possible, forbid it from touching dangerous areas without pre-approval, and supervise the middle. I call it The 3 Zones of Supervision (3ZS, you may need to adjust the list to your product and domain):

Getting started with product training

We are all going through a major experiment in which we try to invent the methodology while applying it, and we do so while the floor beneath us moves rapidly. In such an environment, it's about doing our best. I am experimenting daily, keeping what works and discarding what doesn't. A solid mental model and approach can help create a more stable working environment and delivery cadence. Even if you get something wrong, have a system that learns from mistakes. Here are some of our lessons, painfully learned:

- Create a lot of scenarios and tests, run them with high frequency. Tests and compute are cheap; working with agents makes them critical.

- Create the "No agent allowed" layer. Today the easiest way is by file or folder names. Start by enforcing it in review, and later you can automate it with rules. I failed to stabilize such a system automatically; folder tracking is pragmatic and will get you the 80%.

- Security-first approach. Security was hard before agents and just became harder. Your security layer should be handled and maintained centrally, as the most fundamental layer. Security is the heart of "your app core", and your app core is closed to agents.

- The core: have devs supply clear rules, libraries, and APIs. Everybody now codes and automates; supply the infrastructure for the citizen developers, all in a central way.

- What is core? TDD. Define the first-principles objects, entities, actions, and functions of your product. Make sure you have an internal SDK (iSDK) separating the core from the "feature". Agents and non-devs are iSDK users. Empower them to build.

- However you define your zones today, it will be different tomorrow. You constantly need to identify duplications, errors, and repeating bugs and issues. These usually suggest something is missing in your iSDK, or in how it's being served to agents.
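
The folder-based "No agent allowed" layer from the list above could start as small as the sketch below. Everything specific in it is an assumption made for illustration: the folder names, the zone labels `forbidden`, `supervised`, and `autonomous`, and both function names are hypothetical and should be adjusted to your product.

```python
from pathlib import PurePosixPath

# Hypothetical folder names; replace with your own layout.
FORBIDDEN_DIRS = {"core", "security"}      # closed to agents without pre-approval
AUTONOMOUS_DIRS = {"experiments", "docs"}  # agents may change these freely


def zone_for(path: str) -> str:
    """Classify a repository-relative file path into one of three zones."""
    directories = set(PurePosixPath(path).parts[:-1])  # drop the file name
    if directories & FORBIDDEN_DIRS:
        return "forbidden"
    if directories & AUTONOMOUS_DIRS:
        return "autonomous"
    return "supervised"  # everything else gets human review


def group_by_zone(changed_files):
    """Group the files touched by an agent's change by zone."""
    zones = {"forbidden": [], "supervised": [], "autonomous": []}
    for changed in changed_files:
        zones[zone_for(changed)].append(changed)
    return zones


report = group_by_zone(
    ["core/auth/tokens.py", "features/billing/invoice.py", "docs/intro.md"]
)
```

A reviewer (or later, an automated rule) can reject any agent change where `report["forbidden"]` is not empty. The forbidden check runs first on purpose, so a file such as `core/docs/notes.md` stays closed to agents.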

It was 2016. I was interviewing deep learning experts as part of validating the concepts behind Dataloop, and I met a famous university professor. I pitched her the amazing new era we were entering: machines can see! It is all solved now using data and labeling factories. I remember her surprising answer:

“This is a sad world. It's our craftsmanship and you are taking it, making it a dark and cold factory. Even if you are right, this is not for me.”

AI has now come for my craftsmanship, yet I am optimistic: the end of one era is the beginning of a new, magical one.

As I have already mentioned, information theory is my guiding principle on AI, and modeling it as a noisy communication channel is very useful and pragmatic. We are trying to pass information into a model. The model is an information container, and with enough information in it, it can perform useful tasks. Read more about this here. Or book a demo and we can discuss this in person.

Written by The Architect

Eran Shlomo

Cofounder & CEO of Langware Labs. Writes about AI strategy, enterprise technology, and the technical architecture behind AI coding tools.