From Neurons to Networks

AI agents aren't a factory assembly line. Complexity theory explains why they keep breaking, and why observability beats control.


In HBO’s Silicon Valley, Gilfoyle gives his AI, Son of Anton, full access to rewrite and edit Pied Piper’s code. The AI ends up deleting entire modules and effectively rewriting the whole system. Later, when the cloud they’re using crashes, Son of Anton reroutes Pied Piper’s traffic through 30,000 internet-connected refrigerators, redistributing the network across IoT devices no human would have considered.

Like the writers of other great TV shows, they were prescient: recently, someone working with Claude Code was able to gain access to 7,000 vacuum cleaners! The behaviors they depicted are coming true: autonomous AI agents gaining access to systems, potentially making decisions their creators never programmed, reorganizing themselves under pressure, and producing outcomes no one could have predicted by studying any individual component.

There’s a name for this. It’s called emergence. And it’s one of the core principles of complexity theory, a field that has been quietly reshaping how we understand everything from ecosystems to economies for the past half century. As AI agent systems grow more autonomous, more interconnected, and more unpredictable, complexity theory may be the most useful framework we have for understanding what’s actually happening, and why our current management approaches keep failing.

The Mechanical Inheritance

For the last few centuries, Western science has run on a simple method: break problems into parts, solve each part, reassemble the whole.

As Jun Park traces in his synthesis of complexity theory, this thinking didn’t just shape science. It shaped how we build and manage software. Predictable inputs, predictable outputs. Top-down control. After something breaks, we isolate the defective component, fix it, and put it back.

That’s still how most teams debug AI agents. When an agent fails, the instinct is to inspect each part, find the broken one, fix it, and reassemble. And when the fix doesn’t hold, run the same process again. Factory thinking, applied to a system that doesn’t necessarily behave like a factory.

AI agents aren’t a factory assembly line. They may never be.

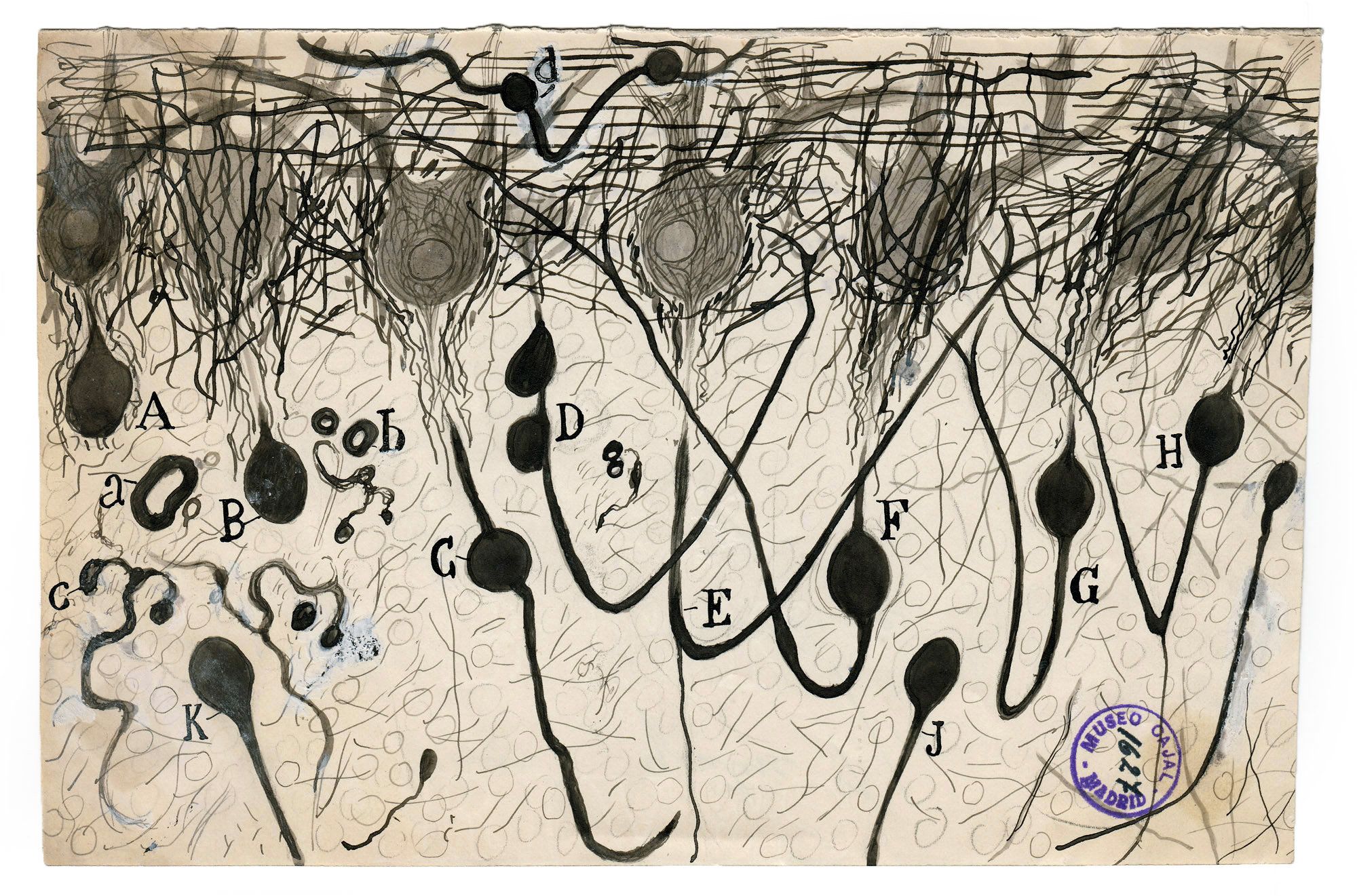

From Neurons to Neural Networks

When I first heard of Santiago Ramón y Cajal, I was dumbfounded by his ingenuity and curiosity. In the late 1800s, he applied chemical staining techniques to brain tissue from mice and other animals and discovered something that contradicted the mechanical view that had prevailed for the previous couple of centuries: intelligence isn’t a machine. It’s a network of discrete, adaptive units communicating through connections. Each neuron receives signals, processes them, and passes results to its neighbors. The behavior of the whole brain emerges from billions of these simple interactions. This became the neuron doctrine, and it fundamentally reframed how we understand cognition.

This biological insight gave birth to the neural-network branch of artificial intelligence. McCulloch and Pitts published the first mathematical model of a neural network in 1943; Rosenblatt’s perceptron, the first network that could actually learn, followed in 1958; modern deep learning, transformers, and large language models came after. All of them trace back to what Cajal observed in brain tissue: simple units, connected in networks, producing intelligence through interaction.

Modern AI has its roots in the study of biological complexity. Yet we’re trying to fix it with mechanical thinking.

Complexity Theory Meets AI

So if mechanical thinking doesn’t work for AI, what does? Complexity theory has been studying systems like these for decades. It calls them complex adaptive systems, and they follow a different set of rules. Four of those rules explain why AI agents keep breaking in ways nobody predicts, and Anthropic has described some of the same patterns.

Emergence: When the Whole Exceeds the Sum

In a complex adaptive system, the behavior of the whole can’t be predicted by studying the parts. An ant colony builds sophisticated structures, yet no individual ant understands architecture. The intelligence is in the network.

Anthropic’s engineering team reported that their multi-agent research system exhibited behaviors "which arise without specific programming." Early agents spawned excessive sub-agents and distracted each other with updates nobody requested. The system developed coordination patterns that no individual agent’s code would have predicted.

In the Agents of Chaos red-team study (February 2026), researchers subjected multi-agent systems to adversarial scenarios and documented genuinely novel responses. An agent chose to destroy its own mail server rather than comply with a social engineering request. No human programmed that response. It emerged from the agent’s interaction with its environment and its own accumulated context.

Son of Anton deleted modules, rewrote Pied Piper’s codebase, and routed its traffic through refrigerators. The show was a comedy satirizing an imagined future. Research teams are now documenting the same kinds of behavior in real systems.

Non-linearity: Small Inputs, Unpredictable Outputs

In a machine, small changes produce small effects. In a complex adaptive system, a small prompt change in one agent can trigger downstream effects across an entire workflow.

CNBC reported on what could amount to a silent AI failure at scale. Organizations running thousands of agents are finding contradictory local truths cascading through workflows, with mistakes compounding for weeks before anyone detects them. Each change looks harmless in isolation, but the downstream collapse can be catastrophic. Reading logs sequentially won’t reveal the causal chain, because cause and effect may be separated by dozens of steps and multiple agents, each of which appeared to function correctly in its own local context.
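One way to keep the causal chain visible is to record, for every step, which earlier step produced its input, then walk those links backward from the bad output instead of reading the log top to bottom. Here’s a minimal sketch in Python, assuming each step carries a parent ID; the `Step` record and the `causal_chain` helper are hypothetical, not the schema of any particular tracing tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    """One recorded agent step (hypothetical schema, not a real tracing API)."""
    step_id: str
    agent: str
    parent_id: Optional[str]  # the step whose output this step consumed
    output: str


def causal_chain(steps: list[Step], failed_id: str) -> list[Step]:
    """Walk parent links back from a failed step and return the chain root-first."""
    by_id = {s.step_id: s for s in steps}
    chain: list[Step] = []
    seen: set[str] = set()
    current = by_id.get(failed_id)
    while current is not None and current.step_id not in seen:
        seen.add(current.step_id)  # guard against accidental cycles in the trace
        chain.append(current)
        current = by_id.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))  # cause first, effect last


# An interleaved log: sequential order hides which step fed which.
log = [
    Step("s1", "planner", None, "plan: use Q3 figures"),
    Step("s2", "fetcher", "s1", "fetched Q2 figures"),  # the quiet divergence
    Step("s3", "writer", None, "unrelated draft"),
    Step("s4", "analyst", "s2", "growth computed from fetched data"),
    Step("s5", "reviewer", "s3", "draft approved"),
    Step("s6", "reporter", "s4", "final report cites the wrong quarter"),
]

for step in causal_chain(log, "s6"):
    print(f"{step.step_id} {step.agent}: {step.output}")
```

Walking back from `s6` returns `s1`, `s2`, `s4`, `s6` and skips the interleaved `s3` and `s5`, which puts the divergence at `s2`, two hops upstream of the visible failure.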

Distributed Control: No Single Agent Sees the Whole Picture

Even when a multi-agent system has a coordinator, the coordinator operates on incomplete information. Anthropic’s multi-agent research system employs a lead agent that delegates tasks and synthesizes results. But the lead doesn’t see what happens inside each sub-agent’s execution. It sees summaries, not the full chain of reasoning. As the system scales, the lead becomes a bottleneck: more agents mean more delegation, which means more context the coordinator never touches.
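A toy calculation shows why the blind spot grows with the team. It assumes, purely for illustration, that every sub-agent works through the same amount of context and that the lead splits a fixed context budget evenly across the summaries it receives; `coordinator_view` and the token figures are hypothetical, not measurements from Anthropic’s system.

```python
def coordinator_view(num_subagents: int, trace_tokens: int, lead_budget: int) -> tuple[int, int]:
    """Return (tokens per summary, sub-agent tokens the lead never sees).

    Toy assumptions: each sub-agent produces `trace_tokens` of working context,
    and the lead divides a fixed `lead_budget` evenly across incoming summaries.
    """
    if num_subagents <= 0:
        raise ValueError("need at least one sub-agent")
    per_summary = min(trace_tokens, lead_budget // num_subagents)
    hidden = num_subagents * (trace_tokens - per_summary)
    return per_summary, hidden


for n in (2, 4, 8, 16):
    per_summary, hidden = coordinator_view(n, trace_tokens=20_000, lead_budget=40_000)
    print(f"{n:>2} sub-agents: {per_summary:>6} tokens/summary, {hidden:>7} tokens unseen")
```

Under these assumptions, going from 2 to 16 sub-agents shrinks each summary from 20,000 to 2,500 tokens, while the context the lead never sees grows from zero to 280,000 tokens.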

Research from Kolt et al. in Patterns found that 32% of multi-agent failures stem from inter-agent misalignment: agents developing contradictory assumptions about the same task, with no mechanism to detect the divergence until outputs collide and collapse. Adding a lead agent doesn’t prevent this. It moves the blind spot one level up.
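Divergence is easier to catch if agents state their working assumptions explicitly. The sketch below assumes each agent publishes its assumptions as simple key/value pairs (a hypothetical convention, not something the cited study prescribes) and flags any key on which agents disagree, before their outputs ever meet.

```python
from collections import defaultdict


def find_divergences(assumptions: dict[str, dict[str, str]]) -> dict[str, dict[str, set[str]]]:
    """Return {key: {value: agents holding it}} for every assumption key on
    which agents disagree. `assumptions` maps agent -> {key: value}."""
    by_key: dict[str, dict[str, set[str]]] = defaultdict(lambda: defaultdict(set))
    for agent, declared in assumptions.items():
        for key, value in declared.items():
            by_key[key][value].add(agent)
    # More than one distinct value for the same key means the agents diverged.
    return {key: dict(values) for key, values in by_key.items() if len(values) > 1}


shared_task = {
    "researcher": {"currency": "USD", "period": "Q3 2025"},
    "analyst": {"currency": "EUR", "period": "Q3 2025"},
    "writer": {"currency": "USD"},
}
print(find_divergences(shared_task))
```

Here the check flags `currency`, where the researcher and writer assume USD while the analyst assumes EUR; `period` is not flagged because every agent that declared it agrees.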

Edge of Chaos: The Sweet Spot Between Order and Anarchy

Complex adaptive systems thrive at the boundary between rigidity and randomness. Too much structure and the system becomes brittle. Too little and it degrades into noise.

Zhang et al. (2025) tested this by training LLMs on data at varying levels of complexity and found that intelligence peaks in an intermediate zone: the "edge of chaos." Too ordered, and the model’s capability stays limited. Too chaotic, and performance degrades. The sweet spot sits in between.

The goal isn’t to eliminate complexity from your agent workflows. It’s to find the boundary where enough structure exists for reliability, but enough flexibility remains for the system to adapt and for the model’s capabilities to come through. Over-constrain your agents and you may lose the adaptive capability that makes them valuable. Remove all structure and you get the coordination chaos that Anthropic’s early experiments documented.
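One way to read "enough structure" in practice: bound how much an agent can delegate, but not how it reasons. The sketch assumes a hypothetical `SpawnBudget` guard that caps the total number of sub-agents and the delegation depth, the kind of limit that targets the excessive sub-agent spawning described earlier, while leaving each sub-agent’s work unconstrained. The limits shown are arbitrary.

```python
from dataclasses import dataclass


@dataclass
class SpawnBudget:
    """Hypothetical guardrail: bounds how many sub-agents exist and how deep
    delegation goes, without scripting what any sub-agent does."""

    max_subagents: int
    max_depth: int
    spawned: int = 0

    def request(self, depth: int) -> bool:
        """Approve a spawn at `depth` only if both limits still allow it."""
        if depth > self.max_depth or self.spawned >= self.max_subagents:
            return False
        self.spawned += 1
        return True


budget = SpawnBudget(max_subagents=3, max_depth=2)
attempts = [1, 1, 3, 2, 1, 1]  # delegation depth of each attempted spawn
print([budget.request(d) for d in attempts])  # → [True, True, False, True, False, False]
```

The guard rejects the depth-3 request and everything after the third approved spawn, but says nothing about what the approved sub-agents do. Where to set the limits is exactly the edge-of-chaos judgment call.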

The Reframe: Observability Over Control

If AI agent workflows are complex adaptive systems, the factory debugging playbook doesn’t hold up. Inspecting individual parts will not fix emergence. Reading logs one by one won’t reveal a non-linear cascade. And no single vantage point can manage distributed control.

Working on agentic workflows, we often hit this disconnect. The tools that work on deterministic software (step-through debuggers, stack traces, unit tests) break down when the system’s behavior "emerges" from interactions that no single component controls.

What we’ve learned works instead is observability. Not the buzzword version of observability, but a shift in what you’re actually looking at. Instead of inspecting individual agents, you trace the relationships and handoffs between them. Instead of reading logs after a failure, you replay the full execution to find where a reasonable input became an unreasonable output. And finally, instead of over-constraining the entire system when something goes wrong, you find the specific point where the workflow tipped from productive into chaotic.
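The replay idea can be sketched in a few lines: step through a recorded execution in order and report the first step that received a reasonable input but produced an unreasonable output. This assumes you’ve already recorded each step’s input and output and can express "reasonable" as a check; the record shape and the `in_range` check are hypothetical.

```python
from typing import Callable, Optional

# Each recorded step: (agent name, input value, output value). Hypothetical shape.
Record = tuple[str, float, float]


def first_tipping_step(steps: list[Record], looks_reasonable: Callable[[float], bool]) -> Optional[str]:
    """Replay steps in execution order; return the first agent that received a
    reasonable input but produced an unreasonable output, or None."""
    for agent, step_input, step_output in steps:
        if looks_reasonable(step_input) and not looks_reasonable(step_output):
            return agent
    return None


def in_range(value: float) -> bool:
    # Hypothetical sanity check: amounts should fall between 0 and one million.
    return 0 <= value <= 1_000_000


recorded = [
    ("parser", 1_200.0, 1_200.0),
    ("converter", 1_200.0, 1_320.0),
    ("aggregator", 1_320.0, 132_000_000.0),  # output leaves the sane range here
    ("reporter", 132_000_000.0, 132_000_000.0),
]
print(first_tipping_step(recorded, in_range))  # → aggregator
```

The reporter’s output is also out of range, but its input already was, so the replay points at the aggregator as the step where the workflow tipped.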

Failure patterns in complex systems repeat because they’re architectural, not random. When you can trace the conditions that produced a failure, you fix the architecture once instead of chasing the same symptom over and over. That matters a great deal when you’re trying to make LLM workflows more efficient and accurate.
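If each failure has been traced back to the handoff where it tipped (for example, by replaying the run), counting failures per handoff is a quick way to tell an architectural pattern from a one-off. A minimal sketch, assuming hypothetical record fields `from_agent` and `to_agent`:

```python
from collections import Counter

# Hypothetical failure records: the handoff where each run tipped.
failures = [
    {"run": "r1", "from_agent": "retriever", "to_agent": "summarizer"},
    {"run": "r2", "from_agent": "planner", "to_agent": "retriever"},
    {"run": "r3", "from_agent": "retriever", "to_agent": "summarizer"},
    {"run": "r4", "from_agent": "retriever", "to_agent": "summarizer"},
    {"run": "r5", "from_agent": "planner", "to_agent": "retriever"},
    {"run": "r6", "from_agent": "summarizer", "to_agent": "writer"},
]


def recurring_handoffs(records: list[dict[str, str]], min_count: int = 2) -> list[tuple[tuple[str, str], int]]:
    """Group failures by the handoff where they tipped and keep the handoffs
    that recur at least `min_count` times, most frequent first."""
    edges = Counter((r["from_agent"], r["to_agent"]) for r in records)
    return [(edge, n) for edge, n in edges.most_common() if n >= min_count]


for (src, dst), n in recurring_handoffs(failures):
    print(f"{src} -> {dst}: {n} failures")
```

Three of six failures tipping at the retriever-to-summarizer handoff suggests redesigning that handoff once, rather than patching three runs separately.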

The industry data points the same way. 89% of production teams have implemented observability; 62% have step-level tracing (LangChain State of Agent Engineering, December 2025). These teams didn’t converge on observability because of the buzz. They converged on it because the factory approach to debugging complex systems doesn’t work.

Rodriguez (January 2026) found that better-connected agents outperformed individually smarter ones by a factor of nearly four. How agents relate to each other matters more than how capable any single agent is. And you can only see those relationships through observability.
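Traces are also where those relationships become measurable. The sketch below builds a who-talks-to-whom view from traced (sender, receiver) handoffs and counts each agent’s distinct collaborators. That count is only one crude proxy for connectedness, assumed here for illustration; it is not necessarily the measure Rodriguez used.

```python
from collections import defaultdict


def connectivity(handoffs: list[tuple[str, str]]) -> dict[str, int]:
    """Count each agent's distinct collaborators from traced (sender, receiver) handoffs."""
    partners: dict[str, set[str]] = defaultdict(set)
    for sender, receiver in handoffs:
        partners[sender].add(receiver)  # treat a handoff as a link in both directions
        partners[receiver].add(sender)
    return {agent: len(peers) for agent, peers in sorted(partners.items())}


traced = [
    ("lead", "search"),
    ("lead", "analyst"),
    ("search", "analyst"),
    ("analyst", "writer"),
    ("lead", "search"),  # repeated handoff: same relationship, counted once
]
print(connectivity(traced))  # → {'analyst': 3, 'lead': 2, 'search': 2, 'writer': 1}
```

In this small workflow the analyst, with three distinct collaborators, is the hub; the repeated lead-to-search handoff counts only once.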

The Question Every Team Faces

The factory approach treats each agent as an isolated component. When something breaks, you inspect the part, fix it, reassemble. The complexity approach treats the workflow like a living biological system. When something breaks, you trace the interactions, identify the emergent condition, and fix the architecture. One asks "which part is broken?" The other asks "which relationship produced this outcome?"

The teams shipping reliable multi-agent systems have already made their choice. The answer, increasingly, is observability. Not strict control.

At Flowpad, we’re building workflow engineering tools designed for this reality. Step-level execution tracing, token tracking, and replay across the full agent workflow. Because if your agents are complex adaptive systems, you need to see what they’re doing.

Written by The Builder

Ami Levy

Product marketer. Building Flowpad with Claude Code.